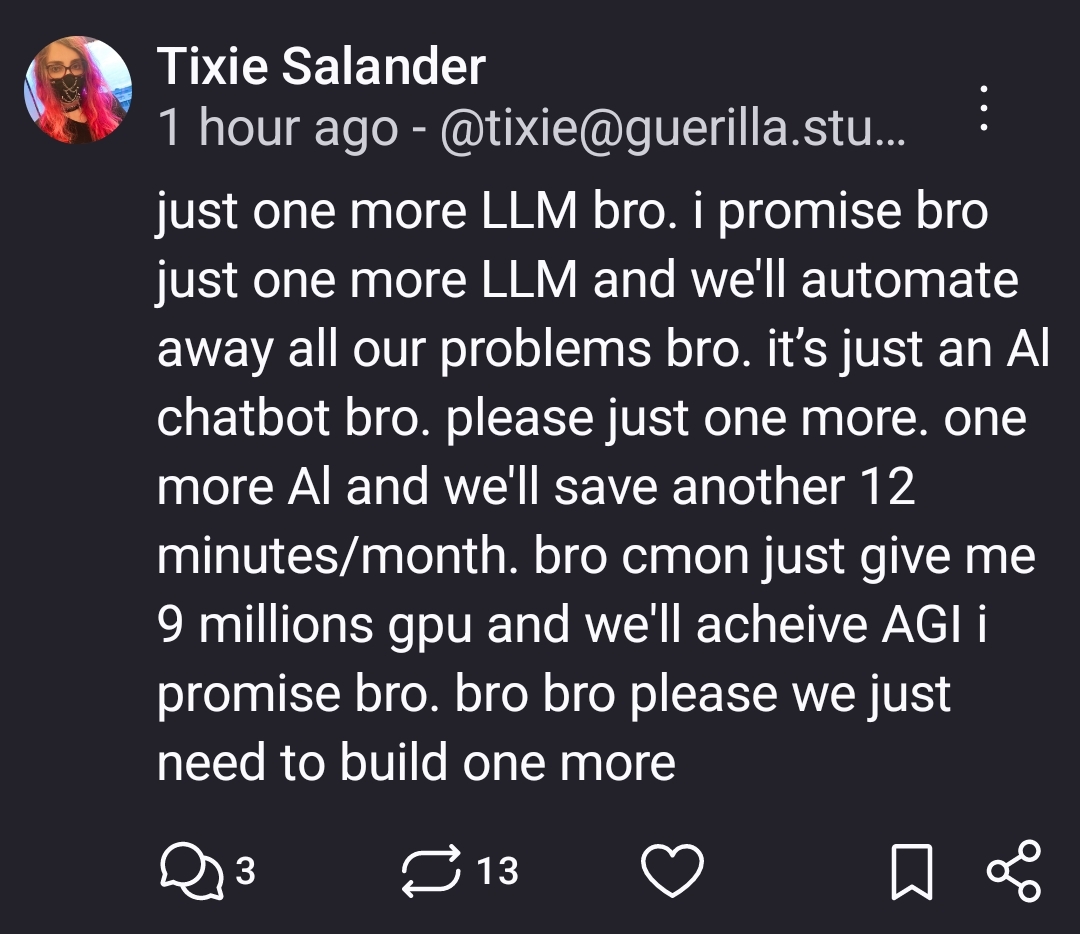

Just one more lane and we'll fix traffic bro

Microblog Memes

Just one more cryptocurrency and we'll break free from the banks

Just one more department for efficiency and we will finally be efficient.

Just one more election and we’ll never have to vote again.

Just 10 more years and we will finally have fusion power

Just one more job and we’ll be in Tahiti, Arthur!

The estimate is coming down. Back in the day, fusion was always 20 years away.

(Anyway, you'll need 10 years just to scale tritium production if you make a commercially viable D+T fusion reactor today. And the last experimental one took 25 years to build.)

Braess's paradox

It always reminds me of how serious people were about trying to build steam-powered aircraft. I imagine they had a bunch of "if we can just get some lighter material" kind of discussions right until some bicycle guys used an internal combustion engine to make history.

Considering how energy efficient the human brain is I hope they keep going after video cards

OMG, video cards made of human brains! It's genius!

People genuinely think LLMs will solve climate change

they will, just not the way they think

Kind of like how an autoclave solves transmissible diseases.

Let the water wars begin!

Wait for me, I'm putting on my Tank Girl outfit.

That's scary af, people are beyond delusional

AI is shit. Poor programming results in heavy errors and intrusive break-ins during benign operations. Worst is that the corpos that adopt it shove it into your systems in a way that makes it unremovable.

Poor programming?

I’m sorry, LLMs are shit for various reasons, but “poor programming” isn’t one of them. And I bring this up because branding it as such suggests there is a “good programming” LLM that doesn’t have the inherent problems that any such system would have. Which just isn’t a thing with the way LLMs work.

And enough cooling to drain a lake

It's always amazing to see how folks latch on to the extreme vs the reality.

ML and AI tools are quite helpful. Yes they make mistakes but at the end of the day it reduces human effort. It's really not hard to see the usefulness.

Reduces human effort in what? Certainly for producing garbage, but it increases my human effort in having to wade through that garbage.

The soul-crushing effort of socialising and producing art, an effort that is eating all that mental and physical energy which would be better utilised in the mines to make more profits for billionaires. /s

It reduces effort in summarizing reports or paper abstracts that you aren't sure you need to read. It reduces effort in outlining formulaic types of writing such as cover letters, work emails, etc.

It reduces effort when brainstorming mundane solutions to things, often by knocking off the most obvious choices but that's an important step in brainstorming if you've ever done it.

Hell, I've never had ChatGPT give me the wrong instructions when I ask it for a basic cooking recipe, and it also cuts out all of the preamble.

If you haven't found uses for them, you either aren't trying too hard or you're simply not in an industry/job that can use them for what they are useful for. Both of which are ok, but it's silly to think your experience of not using them means that no one can use them for anything useful.

Creating a lot of filler "content" is also another use for them, which is what I was getting at. While I have seen some uses for AI, it overwhelmingly seems to be used to create more work than reduce it. Endless spam was bad enough, but now that there's an easy way to generate mass amounts of convincingly unique text, it's a lot more to wade through. Google search, for example, used to be a lot more useful, and results that were wastes of time were easier to spot. That summaries can include inaccuracies or outright "hallucinations" makes it mostly worthless to me since I'd have to at the very least skim the original material to verify just in case anyway.

I've seen AI in action in my industry (software development). I've seen it do the equivalent of slapping together code pieced together from Stack Overflow. It's impressive that it can do that, but what's less impressive are clueless developers trusting the code as-is with minimal verification/tweaks (just because it runs, doesn't mean it's correct or anywhere close to optimal) or the even more clueless executives who think this means they can replace developers with AI or that tasks are a simple matter of "ask the AI to do it".

To add on to your comment: even beyond job/industry, it's like your cooking example. I spin up an LLM locally at home for random tasks. An LLM can be your personal fitness coach, help you with budgeting, improve your emails, summarize news articles, help with creative writing, Christmas shopping list ideas, brainstorm plants for your new garden, etc. They can fit into so many simple roles that you sporadically need.

It's just so easy to fall into the trap of hating them because of the bullshit surrounding them.

Yeah as long as you double check their work and don't assume their facts are accurate they're pretty useful in a lot of ways.

Just because you haven't personally gotten an egregiously wrong answer doesn't mean it won't give one, which means you have to check anyway. Google's AI famously recommended adding glue to your pizza to make the cheese more stringy. Just a couple of weeks ago I got blatantly wrong information about quitting SSRIs, with its source links directly contradicting its confidently stated conclusion. I had to spend EXTRA time researching just to make sure I wasn't being gaslit.

I've found it to be pretty good at transforming and/or extracting data from human input. For example, I've got an app that handles incoming jobs, and among the sources of those jobs is "customer sent an email". Pretty neat to give an LLM a JSON schema and tell it to fill in the details it can figure out from the email. Of course, we disclose to the user that the details were filled in by AI and should be double-checked for accuracy, but it saves our customers a lot of time having the details sussed out from emails that don't follow a specific format.
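A minimal sketch of that kind of extraction step, assuming the model's reply arrives as a JSON string. Everything named here (`JOB_SCHEMA`, its fields, `build_prompt`, `parse_job`) is hypothetical, the "schema" is a simplified field-to-type map rather than real JSON Schema, and the model call itself is left out because the comment doesn't say which API is used.

```python
import json

# Hypothetical, simplified schema: field name -> expected Python type.
# These fields are illustrative and not taken from the app described above.
JOB_SCHEMA = {
    "customer_name": str,
    "address": str,
    "requested_date": str,
    "description": str,
}


def build_prompt(email_body: str) -> str:
    """Ask the model to fill only the fields it can find, as one JSON object."""
    fields = ", ".join(JOB_SCHEMA)
    return (
        "Extract job details from the email below. Reply with one JSON object "
        f"using only these keys: {fields}. Use null for anything not stated.\n\n"
        f"{email_body}"
    )


def parse_job(llm_reply: str) -> dict:
    """Keep only well-typed schema fields; anything missing or odd becomes None."""
    try:
        data = json.loads(llm_reply)
    except json.JSONDecodeError:
        data = {}
    if not isinstance(data, dict):
        data = {}

    job = {}
    for key, expected_type in JOB_SCHEMA.items():
        value = data.get(key)
        job[key] = value if isinstance(value, expected_type) else None

    # Mark the record so the UI can tell the user to double-check it.
    job["ai_filled"] = True
    return job
```

Treating the reply as untrusted input (dropping unknown keys, nulling out wrongly typed values, and flagging the record as AI-filled) is what keeps the "should be double-checked" disclosure meaningful.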

Now, include the environmental costs of some of these tools, and whether they're a) running at a loss or not in order to gain market share, and b) whether they're the tools people are even using.

Do we still come out ahead? Are the minutes saved - if there are truly any - actually saved, or just shoveled onto someone else's plate as environmental damage?

What's the big picture here? Because society honestly should not give a flying fuck if your job becomes slightly easier at the cost of everybody else.

Yeah, it's absolutely not the giant inhuman entities that spew actual sewage and poison straight out into nature just for profits that are the problem anymore, it's what the energy is used for.

They are both the same entities.

No

The extreme is tech bros hyping ML and AI as something they are not, to get shareholders to pay millions into projects that will likely not achieve their end goals. Anyone in the genuine ML and AI domain should be pissed, because it is going to reduce interest and trust in these domains when the bubble bursts, and then real researchers will be left to pick up the pieces whereas the tech bros will likely move on to the next thing.

Things that ChatGPT, gen AI, etc. can do now? They are already crazy wild to me. But somehow, to create more hype about it, they are advertised as being one step away from AGI or one step away from flawlessly pipelining creative processes. It is neither of these yet, and from what it seems, throwing more data at it will likely not get it there either. But of course if you come up with a plan like "we need to double our compute bro and then we will have AGI bro" then you can get investors to pay double or quadruple what they paid before. So in summary, they are basically con men.

The day-to-day reality for me at least is that the new hyped-up LLMs are largely useless for work and in some cases an actual detriment. Some people at work use them a lot, but the heavy users tend to be people who were bad at their jobs, or at least bad at the communication aspect of their jobs. They were bad at communicating before and now, with the help of ChatGPT, they are still bad at communicating, except they have gotten weirdly obstinate about their crappy work output.

Other folks I know have tried to use them to learn new things but gave up on them when they kept getting corrected by subject matter experts.

I played around with them for code generation but did not find it any faster than just writing and debugging my own code.

Yes, they can be useful at times. This does not mean you can just ditch all the human effort and algorithmic solutions and fill every nook and cranny with AI. Which is exactly where we're at currently. And it's turning out dreadful.

Calling this view extreme is extreme my dude.

👌👍

And this is the worst it will ever be

Consumer: buys next phone (and car) with the least amount of AI possible

Hey society!

This is broken!

This isn't how capitalism works! We have to choose stuff with our wallets, not them with their investors.

Fuck them, right?

I don't get it. Who is claiming that if we build one more LLM we will solve AGI? Maybe I just live under a rock. Top comment here is saying people believe LLMs will "solve climate change." Who believes that? I do not know what any of this is on about, I have never seen these people.

People who don't call the tech LLM and just refer to it as AI, that's who