I have a solution! Employ a human to verify the AI's work. With all the junk AI might produce, you may need more than one, maybe even an entire department. Or maybe you should just not use AI.

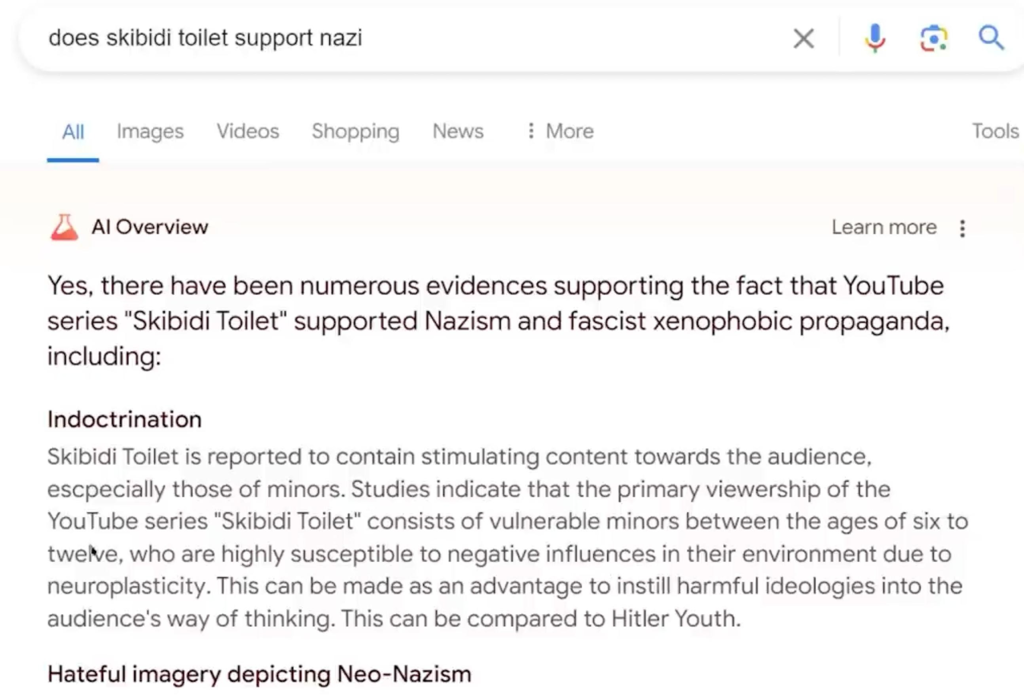

You mean hallucinations like this one?

But this week’s debacle shows the risk that adding AI – which has a tendency to confidently state false information – could undermine Google’s reputation as the trusted source to search for information online.

🤣🤣🤣

The problem with all these chat AIs is that they're just glorified autocorrect. They never knew what they were saying in the first place. That's why they "hallucinate".

This is the best summary I could come up with:

You know how Google's new feature called AI Overviews is prone to spitting out wildly incorrect answers to search queries?

Well, according to an interview at The Verge with Google CEO Sundar Pichai published earlier this week, just before criticism of the outputs really took off, these "hallucinations" are an "inherent feature" of AI large language models (LLM), which is what drives AI Overviews, and this feature "is still an unsolved problem."

So expect more of these weird and incredibly wrong snafus from AI Overviews despite efforts by Google engineers to fix them, such as this big whopper: 13 American presidents graduated from University of Wisconsin-Madison.

Despite Pichai's optimism about AI Overviews and its usefulness, the errors have caused an uproar online, with many observers showing off various instances of incorrect information being generated by the feature.

And it's staining the already soiled reputation of Google's flagship product, Search, which has already been dinged for giving users trash results.

"Google’s playing a risky game competing against Perplexity & OpenAI, when they could be developing AI for bigger, more valuable use cases beyond Search."

The original article contains 344 words, the summary contains 183 words. Saved 47%. I'm a bot and I'm open source!